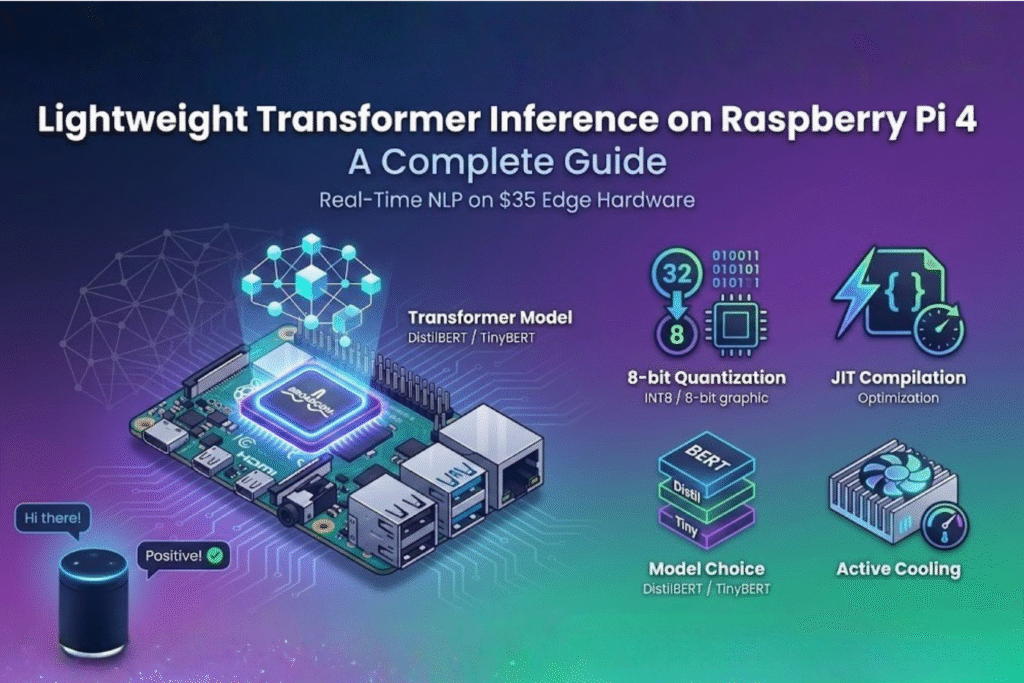

Lightweight Transformer Inference on Raspberry Pi 4

Edge computing now brings sophisticated AI capabilities to affordable hardware. One of the most exciting developments is lightweight transformer inference on the Raspberry Pi 4, which enables natural language processing directly on a $35-75 device. This guide explores how to achieve real-time performance with transformer models on constrained hardware.

Understanding the Hardware Challenge

The Raspberry Pi 4, available in 2GB, 4GB, and 8GB RAM variants, provides four ARM Cortex-A72 cores capable of running optimized neural networks. Transformer inference on this board still presents particular challenges: the cores run at 1.5 GHz and favor power efficiency over raw computational throughput.

Memory bandwidth is the primary bottleneck. The LPDDR4 RAM operates at lower speeds than desktop memory, which makes memory-intensive operations such as attention particularly costly. This is why optimization techniques are essential on the Pi 4.

There is no dedicated neural processing unit, so all computation happens on the CPU. However, ARM’s NEON SIMD instructions provide vectorization that frameworks can exploit to accelerate inference.

Essential Setup for Success

Setting up your system correctly makes a dramatic difference in inference performance. First, install a 64-bit operating system. The aarch64 architecture provides access to optimized libraries that can double performance compared to 32-bit systems.

Thermal management cannot be overlooked when running transformer inference on the Raspberry Pi 4. Sustained inference workloads generate significant heat.
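
One way to check whether heat is actually affecting a run is to log the SoC temperature and the firmware's throttling flags while the model is under load. The sketch below is a minimal illustration, assuming Raspberry Pi OS, where the temperature is exposed in millidegrees Celsius at `/sys/class/thermal/thermal_zone0/temp` and `vcgencmd get_throttled` prints a line such as `throttled=0x50005`. The helper names `read_soc_temp_c` and `decode_throttled` are hypothetical, not part of any library.

```python
from pathlib import Path

# Assumed sysfs location of the SoC temperature on Raspberry Pi OS,
# reported in millidegrees Celsius.
THERMAL_PATH = "/sys/class/thermal/thermal_zone0/temp"

# Bit positions in the value printed by `vcgencmd get_throttled`.
THROTTLE_FLAGS = {
    0: "under-voltage detected",
    1: "ARM frequency capped",
    2: "currently throttled",
    3: "soft temperature limit active",
    16: "under-voltage has occurred",
    17: "ARM frequency capping has occurred",
    18: "throttling has occurred",
    19: "soft temperature limit has occurred",
}


def read_soc_temp_c(path=THERMAL_PATH):
    """Return the SoC temperature in degrees Celsius."""
    return int(Path(path).read_text().strip()) / 1000.0


def decode_throttled(line):
    """Turn a line like 'throttled=0x50005' into a list of active flags."""
    value = int(line.strip().split("=", 1)[1], 16)
    return [name for bit, name in THROTTLE_FLAGS.items() if value & (1 << bit)]
```

On the Pi itself, the input to `decode_throttled` could come from `subprocess.check_output(["vcgencmd", "get_throttled"], text=True)`. Calling both helpers between inference batches gives a rough picture of whether slowdowns line up with rising temperature.
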
Without adequate cooling, the Pi 4 will throttle, reducing your model’s speed by 20-40%. Heat sinks and active cooling fans are recommended for any serious deployment.

Configure your boot settings to allocate appropriate GPU memory if you’re combining vision tasks with language processing. While the Pi’s GPU won’t accelerate transformer computations directly, proper memory allocation helps keep the system stable during inference.

Power supply quality matters more than many developers realize. Insufficient power causes voltage drops under load, leading to system instability during intensive inference. Use a quality 5V 3A USB-C power supply for reliable performance.

Framework Selection

Choosing the right deep learning framework significantly affects inference performance on the Raspberry Pi 4.

PyTorch provides excellent ARM support with the qnnpack backend, which is designed for quantized neural networks on mobile and embedded devices. The framework can be installed via pip and works on 64-bit Raspberry Pi OS.

TensorFlow Lite is another strong option, with comprehensive ARM optimizations and a smaller deployment footprint. Its delegate system allows hardware-specific acceleration.

ONNX Runtime has emerged as a compelling choice thanks to its aggressive graph optimizations and broad model format support. It can automatically apply operator fusion, constant folding, and layout transformations that dramatically improve performance.

Model Architecture Selection

Not all transformer architectures are equally suited for transformer inference on Raspberry Pi 4.
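
Parameter count gives a quick first estimate of how much RAM a model's weights need, which is a useful filter on a board with 2GB to 8GB of memory. The sketch below is a back-of-the-envelope calculation using only the parameter counts quoted in this guide. It assumes 4 bytes per weight for 32-bit floats and 1 byte per weight for 8-bit integers, and it ignores activations, tokenizer data, and framework overhead. The names `PARAM_COUNTS` and `weight_megabytes` are illustrative.

```python
# Parameter counts quoted in this guide. Models whose sizes the text
# does not state are left out rather than guessed.
PARAM_COUNTS = {
    "BERT": 110_000_000,
    "DistilBERT": 66_000_000,
    "TinyBERT": 14_500_000,
}

# Storage cost per weight for the two precisions discussed in this guide.
BYTES_PER_WEIGHT = {"fp32": 4, "int8": 1}


def weight_megabytes(num_params, dtype="fp32"):
    """Approximate weight storage in MB (10**6 bytes), weights only."""
    return num_params * BYTES_PER_WEIGHT[dtype] / 1e6


for name, count in PARAM_COUNTS.items():
    fp32 = weight_megabytes(count, "fp32")
    int8 = weight_megabytes(count, "int8")
    print(f"{name:<11} fp32: {fp32:6.1f} MB   int8: {int8:6.1f} MB")
```

These figures are lower bounds on real memory use, but they make the relative gap between the candidate models easy to see.
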
Traditional BERT models with 110M parameters prove too large for comfortable real-time inference. This is where distilled variants become essential.

DistilBERT reduces the original BERT’s size by 40% while retaining 97% of its language understanding capabilities, which makes it an excellent starting point. With 66M parameters, it strikes a balance between capability and efficiency and suits sentiment analysis, text classification, and question answering tasks.

TinyBERT pushes compression even further, achieving a 7.5x smaller size through aggressive knowledge distillation. For the most resource-constrained scenarios, it delivers impressive results with only 14.5M parameters while maintaining strong performance on downstream tasks.

MobileBERT, designed specifically for mobile and edge devices, employs bottleneck structures and inverted-bottleneck attention mechanisms. Its architecture targets the kind of deployment the Pi 4 represents, providing excellent latency-accuracy trade-offs.

ALBERT (A Lite BERT) uses parameter sharing across layers to reduce model size significantly. This makes it another strong candidate, especially when you need larger model capacity without proportional memory increases. Note that sharing parameters shrinks the memory footprint rather than the computation performed per layer.

Quantization: The Performance Multiplier

Quantization is the single most impactful optimization for lightweight transformer inference on the Raspberry Pi 4. Converting 32-bit floating-point weights to 8-bit integers reduces model size by 4x and accelerates computation through the 8-bit integer SIMD arithmetic that ARM processors provide.

For transformer inference on Raspberry Pi 4, dynamic quantization offers the best trade-off.
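
To make the weight-conversion step concrete, here is a minimal pure-Python sketch of symmetric per-tensor INT8 quantization. It illustrates the arithmetic only and is not how PyTorch or any other framework implements quantization. The function names, the sample weights, and the symmetric scaling scheme are assumptions chosen for simplicity.

```python
from array import array


def quantize_int8(weights):
    """Symmetric per-tensor quantization: map floats to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    codes = (max(-127, min(127, round(w / scale))) for w in weights)
    return array("b", codes), scale


def dequantize(q, scale):
    """Recover approximate float values from the INT8 codes."""
    return [v * scale for v in q]


# Made-up example weights, stored as 32-bit floats.
weights = array("f", [0.52, -1.27, 0.003, 0.9, -0.41])
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)

fp32_bytes = len(weights) * weights.itemsize  # 4 bytes per weight
int8_bytes = len(q) * q.itemsize              # 1 byte per weight
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print(f"storage: {fp32_bytes} B -> {int8_bytes} B")
print(f"largest round-trip error: {max_error:.4f} (step size {scale:.4f})")
```

The storage ratio is the 4x reduction mentioned above, and the round-trip error stays within half a quantization step, which is why accuracy usually degrades only slightly.
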
Weights are stored as INT8, while activations are computed in floating point and quantized dynamically at runtime. This approach maintains accuracy while achieving 2-4x speedups.

Post-training quantization requires no retraining, making it ideal for quick deployments. However, quantization-aware training yields even better results if you’re training custom models specifically for the Pi 4.

The qnnpack backend, designed for ARM processors, enables these quantization benefits. Activating qnnpack is essential for achieving optimal performance.

JIT Compilation Benefits

Just-In-Time compilation transforms Python code into an optimized intermediate representation that executes faster. On the Raspberry Pi 4, JIT can provide 1.5-2x speedups by eliminating Python interpreter overhead and enabling operation fusion.

Script mode in PyTorch allows the entire model to be compiled ahead of time, which can increase throughput from 20 frames per second to 30 fps by fusing operations and optimizing execution graphs.

When implementing transformer inference on Raspberry Pi 4 with JIT, test thoroughly. Some dynamic Python behaviors don’t translate well to scripted code, potentially causing runtime errors in your lightweight transformer inference on the Raspberry Pi