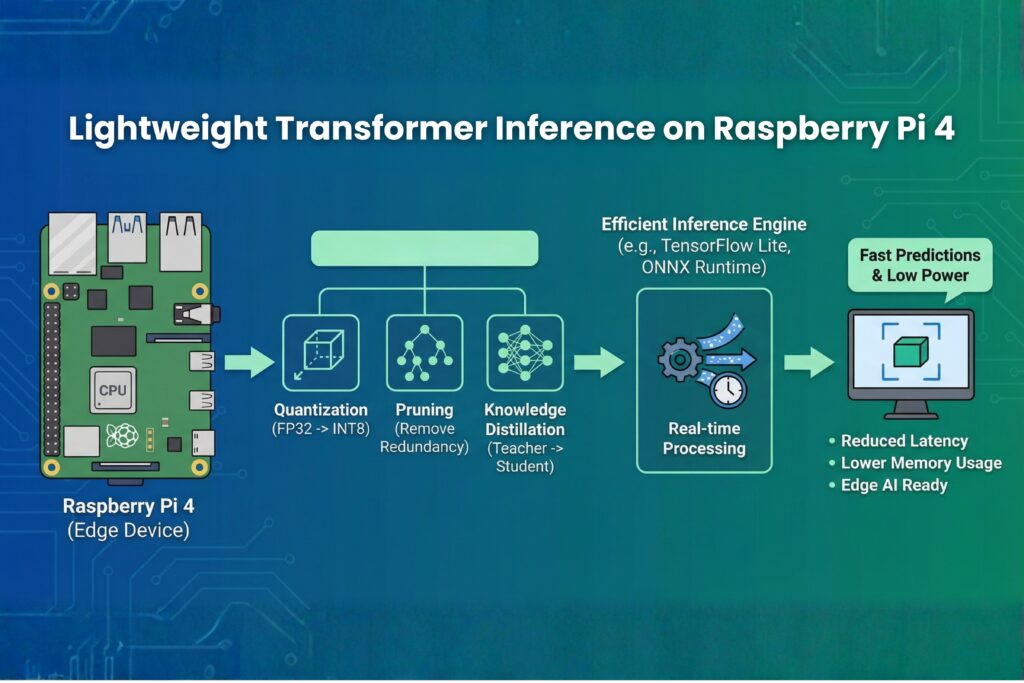

Edge computing has brought sophisticated AI capabilities to affordable hardware. One of the most exciting developments is lightweight transformer inference on the Raspberry Pi 4, which enables natural language processing directly on a $35-75 device. This guide explains how to get near-real-time performance from transformer models on constrained hardware.

Understanding the Hardware Challenge

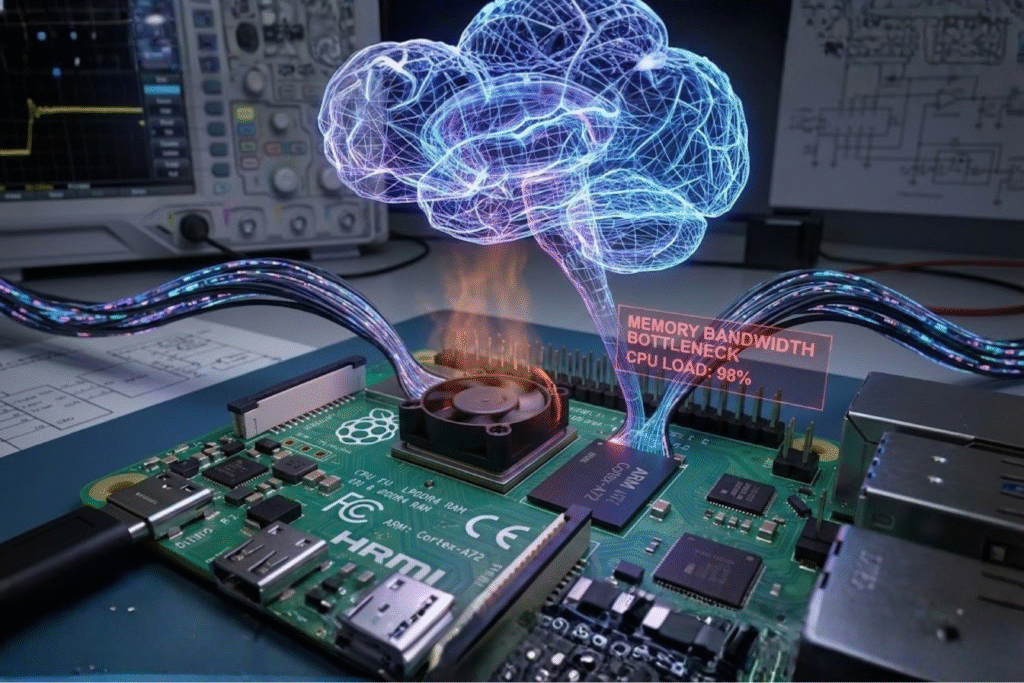

The Raspberry Pi 4, available in 2GB, 4GB, and 8GB RAM variants, provides four ARM Cortex-A72 cores capable of running optimized neural networks. Transformer inference is still a challenge on this board: the cores run at 1.5 GHz and are built for power efficiency rather than raw computational throughput.

Memory bandwidth is the primary bottleneck. The LPDDR4 RAM is slower than desktop memory, which makes memory-intensive operations such as attention particularly expensive. This is why the optimization techniques in this guide are essential rather than optional.

There is no dedicated neural processing unit, so all computation happens on the CPU. ARM’s NEON SIMD instructions do provide vectorization, and inference frameworks exploit them to accelerate the matrix operations that dominate transformer workloads.

Essential Setup for Success

Setting up the system correctly makes a dramatic difference in inference performance. First, install a 64-bit operating system. The aarch64 architecture gives access to optimized libraries that can double performance compared to a 32-bit system.
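A quick way to confirm the 64-bit setup is to check both the kernel architecture and the pointer width of the running Python interpreter. The sketch below is illustrative only; the helper name `is_64bit_arm` is an assumption, not part of any library.

```python
import platform
import struct


def is_64bit_arm(machine: str, pointer_bits: int) -> bool:
    """Return True when both the kernel and the Python build are 64-bit ARM."""
    return machine in ("aarch64", "arm64") and pointer_bits == 64


machine = platform.machine()              # "aarch64" on 64-bit Raspberry Pi OS
pointer_bits = struct.calcsize("P") * 8   # pointer width of this interpreter
print(f"machine={machine}, python={pointer_bits}-bit, "
      f"64-bit ARM: {is_64bit_arm(machine, pointer_bits)}")
```

A 64-bit kernel with a 32-bit userland reports `aarch64` but a 32-bit pointer width, which is why both values are checked.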

Thermal management cannot be overlooked. Sustained inference workloads generate significant heat, and without adequate cooling the Pi 4 will throttle, reducing your model’s speed by 20-40%. Heat sinks and an active cooling fan are recommended for any serious deployment.

If you are combining vision tasks with language processing, configure the boot settings (the gpu_mem option in config.txt) to allocate appropriate GPU memory. The Pi’s GPU will not accelerate transformer computations directly, but a sensible memory split helps keep the system stable under load.

Power supply quality matters more than many developers realize. An insufficient supply causes voltage drops under load, which leads to instability during intensive inference. Use a quality 5V 3A USB-C power supply.

Framework Selection

The choice of deep learning framework has a significant impact on performance. PyTorch provides good ARM support through its qnnpack backend, which is designed for quantized neural networks on mobile and embedded devices. It can be installed via pip and works on 64-bit Raspberry Pi OS.

TensorFlow Lite is another strong option, with comprehensive ARM optimizations and a smaller deployment footprint. Its delegate system allows hardware-specific acceleration.

ONNX Runtime has emerged as a compelling choice thanks to its aggressive graph optimizations and broad model format support. It can automatically apply operator fusion, constant folding, and layout transformations, all of which can improve inference speed substantially.

Model Architecture Selection

Not all transformer architectures are equally suited to the Pi 4. BERT-base, with 110M parameters, is too large for comfortable real-time inference. This is where distilled and compressed variants become essential.

DistilBERT reduces the original BERT’s size by 40% while retaining 97% of its language understanding capabilities, which makes it an excellent starting point. With 66M parameters, it balances capability and efficiency and suits sentiment analysis, text classification, and question answering.

TinyBERT pushes compression further, reaching a model 7.5x smaller than BERT-base through aggressive knowledge distillation. With only 14.5M parameters it is a good fit for the most resource-constrained deployments while maintaining strong performance on downstream tasks.

MobileBERT, designed specifically for mobile and edge devices, uses bottleneck and inverted-bottleneck structures to keep the network thin. Its architecture targets exactly the kind of hardware discussed here and offers a good latency-accuracy trade-off.

ALBERT (A Lite BERT) shares parameters across layers to reduce model size significantly. This makes it a strong candidate when memory is the limiting factor. Note that parameter sharing shrinks the stored weights but not the computation per layer, so ALBERT saves memory more than it saves latency.

Quantization: The Performance Multiplier

Quantization is the single most impactful optimization on the Pi 4. Converting 32-bit floating-point weights to 8-bit integers reduces model size by 4x and accelerates computation through the 8-bit integer SIMD instructions that NEON provides.
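The 4x figure follows directly from the bytes stored per weight. The sketch below works through the arithmetic for the parameter counts quoted in this guide; it counts weights only and ignores quantization scales, zero points, and any layers left in floating point, so real files will be somewhat larger than the INT8 estimate.

```python
def weight_size_mb(num_params: int, bits_per_weight: int) -> float:
    """Approximate weight storage in megabytes (10^6 bytes)."""
    return num_params * bits_per_weight / 8 / 1e6


# Parameter counts as quoted in this guide.
models = {"BERT-base": 110_000_000, "DistilBERT": 66_000_000, "TinyBERT": 14_500_000}

for name, params in models.items():
    fp32 = weight_size_mb(params, 32)
    int8 = weight_size_mb(params, 8)
    print(f"{name}: {fp32:.0f} MB as FP32, {int8:.1f} MB as INT8")
```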

Dynamic quantization offers the best trade-off on this hardware. Weights are stored as INT8, while activations stay in floating point and are quantized on the fly at run time. This approach preserves accuracy while achieving 2-4x speedups.

Post-training quantization requires no retraining, which makes it ideal for quick deployments. Quantization-aware training yields better results if you are training a custom model specifically for the Pi.

In PyTorch, the qnnpack backend is what delivers these quantization benefits on ARM. Selecting it as the quantized engine is essential for good performance.
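As a minimal sketch of dynamic quantization with qnnpack in PyTorch, the example below quantizes the linear layers of a small stand-in network. The two-layer model is a hypothetical placeholder for a transformer feed-forward block, not a real pretrained model, and the engine is only selected if the installed PyTorch build reports support for it.

```python
import torch
import torch.nn as nn

# Select qnnpack only when this PyTorch build supports it.
if "qnnpack" in torch.backends.quantized.supported_engines:
    torch.backends.quantized.engine = "qnnpack"

# Hypothetical stand-in for a transformer feed-forward block.
model = nn.Sequential(
    nn.Linear(256, 1024),
    nn.ReLU(),
    nn.Linear(1024, 256),
).eval()

# Store nn.Linear weights as INT8; activations are quantized at run time.
quantized = torch.quantization.quantize_dynamic(model, {nn.Linear}, dtype=torch.qint8)

x = torch.randn(1, 128, 256)  # (batch, sequence length, hidden size)
with torch.no_grad():
    y_fp32 = model(x)
    y_int8 = quantized(x)

print("max abs difference:", (y_fp32 - y_int8).abs().max().item())
```

The printed difference gives a rough feel for the numerical error that quantization introduces; task accuracy should still be checked on real data.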

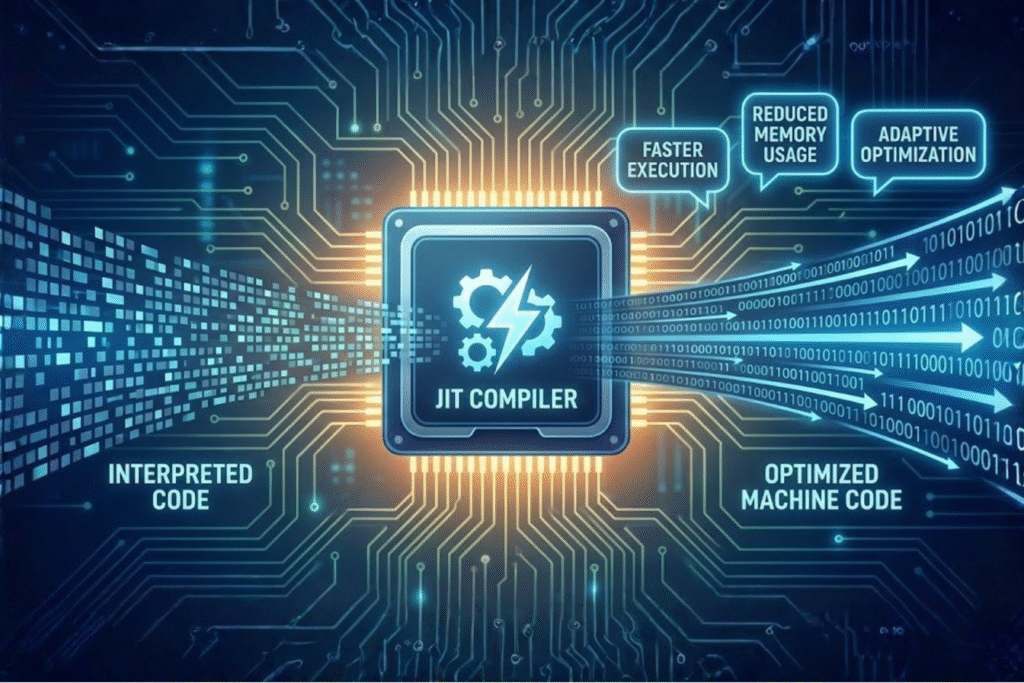

JIT Compilation Benefits

Just-In-Time compilation transforms Python model code into an optimized intermediate representation that executes faster. On the Pi 4, JIT can provide 1.5-2x speedups by eliminating Python interpreter overhead and enabling operation fusion.

Script mode in PyTorch compiles the entire model ahead of time, fusing operations and optimizing the execution graph. The increase from 20 frames per second to 30 fps quoted for this technique is a vision-style throughput figure, so measure your own transformer to see how much of that gain carries over.

Test thoroughly when adopting JIT. Some dynamic Python behaviors do not translate well to scripted code and can cause runtime errors in your application.
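The sketch below shows the basic TorchScript workflow with `torch.jit.script` and the kind of equivalence check worth running after compilation. `TinyEncoderBlock` is a hypothetical stand-in for part of a transformer layer; pretrained models from model hubs are often exported with `torch.jit.trace` instead, which is an assumption to verify against your framework’s documentation.

```python
import torch
import torch.nn as nn


class TinyEncoderBlock(nn.Module):
    """Hypothetical stand-in: a feed-forward sublayer with a residual connection."""

    def __init__(self, dim: int = 64, hidden: int = 128):
        super().__init__()
        self.norm = nn.LayerNorm(dim)
        self.fc1 = nn.Linear(dim, hidden)
        self.fc2 = nn.Linear(hidden, dim)

    def forward(self, x: torch.Tensor) -> torch.Tensor:
        return x + self.fc2(torch.relu(self.fc1(self.norm(x))))


model = TinyEncoderBlock().eval()
scripted = torch.jit.script(model)  # compile the module to TorchScript

x = torch.randn(1, 16, 64)
with torch.no_grad():
    expected = model(x)
    actual = scripted(x)

# Confirm the compiled module reproduces the eager output.
print("outputs match:", torch.allclose(expected, actual, atol=1e-5))
```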

Real-World Performance Benchmarks

Realistic performance expectations are crucial for planning. Based on extensive testing with various models on the Pi 4, here are representative figures:

DistilBERT with quantization and JIT compilation achieves 250-350ms per inference at sequence length 128 and 150-200ms at sequence length 64, with memory usage around 400MB. That is practical for many interactive applications.

TinyBERT with the same optimizations delivers 120-180ms per inference at sequence length 128 and 80-120ms at sequence length 64, while consuming only 200MB of memory. This is among the fastest results currently achievable on the board.

MobileBERT achieves 200-300ms per inference at sequence length 128 and 120-180ms at sequence length 64, using approximately 350MB of memory. Together, these benchmarks show that near-real-time performance is within reach for many NLP tasks.

For comparison, unoptimized models can take 2-5 seconds per inference, which shows how much the optimization techniques matter in practice.
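Figures like these are easiest to compare when they are measured the same way each time. The harness below is one possible sketch, using only the standard library: it discards a few warm-up runs, then reports the median, 95th-percentile, and maximum latency. The function name `benchmark` and the dummy workload are illustrative assumptions; in practice `fn` would wrap a call to your model.

```python
import statistics
import time
from typing import Callable, Dict


def benchmark(fn: Callable[[], object], warmup: int = 3, runs: int = 20) -> Dict[str, float]:
    """Time fn() repeatedly and summarize latency in milliseconds."""
    for _ in range(warmup):
        fn()  # warm-up runs are not recorded

    samples_ms = []
    for _ in range(runs):
        start = time.perf_counter()
        fn()
        samples_ms.append((time.perf_counter() - start) * 1000.0)

    samples_ms.sort()
    # Nearest-rank 95th percentile, computed with integer arithmetic.
    p95_index = (95 * len(samples_ms) + 99) // 100 - 1
    return {
        "median_ms": statistics.median(samples_ms),
        "p95_ms": samples_ms[p95_index],
        "max_ms": samples_ms[-1],
    }


# Dummy CPU-bound workload standing in for a model call.
stats = benchmark(lambda: sum(i * i for i in range(10_000)))
print(stats)
```

Reporting the tail (p95 and max) alongside the median makes thermal throttling and thread contention visible, since both show up as latency spikes rather than as a shift in the typical case.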

Memory Optimization Strategies

Memory management often determines success or failure. The 8GB variant provides the most headroom, but even 4GB boards can handle optimized transformers with careful planning.

Running inference in no-gradient mode stops the framework from recording the computation graph and keeping intermediate activations for a backward pass that never happens. This single change can reduce memory consumption by 40-60%, which can be the difference between a model that fits and one that does not.
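In PyTorch, no-gradient mode is the `torch.no_grad()` context manager. The sketch below uses a small linear layer as a hypothetical stand-in for a model and shows that outputs produced inside the context carry no autograd history.

```python
import torch
import torch.nn as nn

model = nn.Linear(32, 4).eval()  # hypothetical stand-in for a classifier head
x = torch.randn(1, 32)

y_tracked = model(x)             # autograd records a graph for this output

with torch.no_grad():
    y_infer = model(x)           # no graph is recorded inside this block

print(y_tracked.requires_grad, y_infer.requires_grad)
```

Recent PyTorch versions also offer `torch.inference_mode()`, which serves the same purpose with slightly stricter rules.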

Keep one copy of the model resident in memory and reuse it for every request. Loading the model once amortizes the loading cost across all inferences, which is critical for a responsive application.

Release references to intermediate results between inferences to prevent memory from creeping upward. Python’s garbage collector may not free memory immediately, so long-running services can degrade gradually; if usage keeps growing, trigger a collection explicitly.
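One simple way to load once and reuse is to cache the loader function. In the sketch below, `get_model` and `handle_request` are hypothetical names, and the returned lambda is a placeholder for a real model; the load counter exists only to show that the expensive step runs a single time.

```python
from functools import lru_cache

LOAD_COUNT = 0  # counts how often the expensive load actually runs


@lru_cache(maxsize=1)
def get_model():
    """Load the model on first use; later calls return the cached instance."""
    global LOAD_COUNT
    LOAD_COUNT += 1
    # Placeholder: a real application would load weights from disk here.
    return lambda text: "positive" if "good" in text.lower() else "negative"


def handle_request(text: str) -> str:
    return get_model()(text)


print(handle_request("Good service"), handle_request("Slow delivery"), LOAD_COUNT)
```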

Thread Configuration and CPU Management

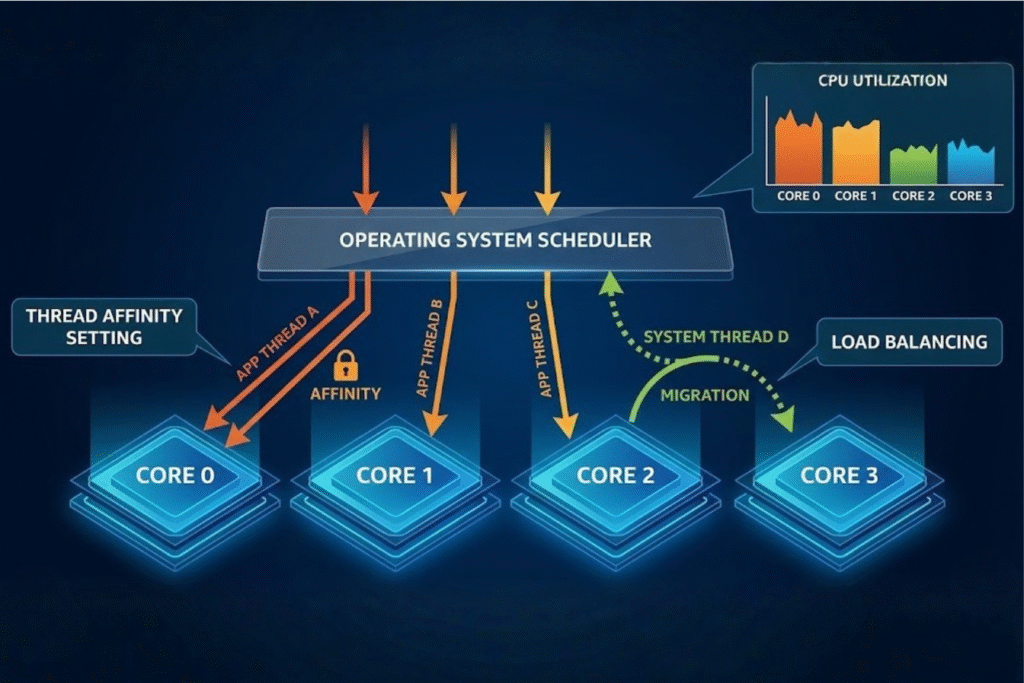

PyTorch uses all available CPU cores by default, which may not be optimal on the Pi 4. Background services and system processes compete for the same four cores, and the resulting thread contention causes latency spikes that affect user experience.

For applications that need consistent latency, using 2-3 threads instead of 4 reduces peak-to-peak latency variance by 50-70% while sacrificing only 10-15% of throughput. This trade-off often results in a better user experience.
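A minimal sketch of limiting PyTorch’s intra-op threads follows. The helper `pick_thread_count` is a hypothetical convenience that leaves one core free for the rest of the system; on a four-core Pi 4 it selects three threads.

```python
import os

import torch


def pick_thread_count(total_cores: int, reserved: int = 1) -> int:
    """Leave `reserved` cores for the OS and other services, but use at least one."""
    return max(1, total_cores - reserved)


n_threads = pick_thread_count(os.cpu_count() or 1)
torch.set_num_threads(n_threads)  # intra-op parallelism for CPU kernels
print("PyTorch threads:", torch.get_num_threads())
```

Set the thread count early, before the first inference, so that every subsequent operation uses the same configuration.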

CPU affinity can help further by binding the inference process to specific cores, which prevents costly migrations between cores. This is particularly valuable in multi-process deployments where model inference runs alongside other services.
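On Linux, affinity can be set from Python with `os.sched_setaffinity`. The sketch below pins the current process to a requested set of cores, falling back to whatever cores the process is already allowed to use if none of the requested ones are available. The function name `pin_to_cores` and the choice of cores 1 to 3 are assumptions for illustration.

```python
import os


def pin_to_cores(cores: set) -> set:
    """Pin this process to `cores` where supported; return the resulting core set."""
    if not hasattr(os, "sched_setaffinity"):
        return set()  # affinity control is unavailable on this platform
    allowed = os.sched_getaffinity(0)
    target = (cores & allowed) or allowed  # never request an empty set
    os.sched_setaffinity(0, target)
    return os.sched_getaffinity(0)


# Example: keep inference on cores 1-3 and leave core 0 to other services.
print("pinned to:", pin_to_cores({1, 2, 3}))
```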

Practical Applications and Use Cases

Running transformers on the Pi 4 enables compelling applications across many domains. Edge-based sentiment analysis systems can process customer feedback locally, preserving privacy while providing real-time insights without cloud dependencies or network latency.

Smart home voice assistants benefit tremendously. Processing natural language commands locally eliminates cloud latency and privacy concerns, creating more responsive and trustworthy systems that work even without internet connectivity.

Industrial IoT deployments use on-device inference for anomaly detection in log files, predictive maintenance through text analysis, and quality control systems that classify defects from their descriptions. The low cost and power consumption make large-scale deployments economically viable.

Educational robotics projects use it to add natural language understanding to autonomous systems. Students learn practical AI deployment while building sophisticated projects on accessible hardware, without expensive GPU workstations.

Thermal Management Considerations

Sustained inference generates substantial heat and can trigger thermal throttling that reduces performance by 20-40%. Active cooling keeps performance consistent over long operating periods, which is essential for production deployments.

Monitor CPU temperature during development and deployment. If temperatures regularly exceed 70°C, inference performance will suffer: the Pi 4 firmware begins throttling at around 80°C, so readings above 70°C leave little margin. Adequate ventilation, heat sinks, or an active cooling fan keep temperatures in a safe range.

Consider the ambient environment as well. Enclosed spaces without airflow heat up faster, while well-ventilated locations sustain better performance.
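On Linux, the CPU temperature is usually exposed through sysfs in thousandths of a degree Celsius. The sketch below reads it and compares it against the 70°C guideline mentioned above. The path is the common location on Raspberry Pi OS, but treat it as an assumption for other systems; the function names are illustrative.

```python
from pathlib import Path
from typing import Optional

# Common sysfs location on Raspberry Pi OS (assumed; may differ elsewhere).
THERMAL_PATH = Path("/sys/class/thermal/thermal_zone0/temp")


def parse_millidegrees(raw: str) -> float:
    """Convert a sysfs reading such as '48312\\n' to degrees Celsius."""
    return int(raw.strip()) / 1000.0


def read_cpu_temp_c(path: Path = THERMAL_PATH) -> Optional[float]:
    """Return the CPU temperature in Celsius, or None if it cannot be read."""
    try:
        return parse_millidegrees(path.read_text())
    except (OSError, ValueError):
        return None


temp = read_cpu_temp_c()
if temp is None:
    print("temperature sensor not available")
elif temp > 70.0:
    print(f"{temp:.1f} C: above the 70 C guideline, check cooling")
else:
    print(f"{temp:.1f} C: ok")
```

Logging this value alongside inference latency makes it easy to tell whether a slowdown coincides with rising temperature.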

Troubleshooting Common Issues

Out-of-memory errors are the most common problem. Reduce the batch size to one, decrease the maximum sequence length, or switch to a smaller model. The 8GB Pi 4 provides significant headroom over the 4GB version.

Slow initial inference results from model loading and JIT compilation. For a responsive application, keep the model loaded in memory and run a few warm-up inferences during initialization so that everything is compiled and cached before the first real request.

Inconsistent latency often stems from thermal throttling or thread contention. Monitor temperatures and limit the thread count as described earlier to stabilize performance during sustained operation.

Getting Started with Your Project

Begin with a simple sentiment analysis project. Use a pre-trained DistilBERT model from Hugging Face, apply quantization, and measure performance before optimizing further.

Document your optimization work as you go. Track inference times, memory usage, accuracy metrics, and temperature readings. This data informs the trade-offs between model size and performance in your application.

Join communities focused on edge AI and the Raspberry Pi. GitHub repositories, Discord servers, and forums contain valuable insights from developers who have solved similar problems.

Conclusion

Lightweight transformer inference on the Raspberry Pi 4 turns natural language processing from a cloud-dependent service into an edge capability. Through quantization, JIT compilation, careful model selection, and system tuning, you can achieve impressive performance on affordable hardware. Whether you are building educational projects, privacy-focused voice assistants, or industrial IoT solutions, the Pi 4 provides capabilities that previously required expensive cloud infrastructure.